Why Nvidia’s “10x Annual Crash” is the Most Dangerous Number in Tech

Jensen Huang’s CES 2026 keynote reveals a deflationary shockwave hidden inside a $5 trillion empire

The air at CES 2026 didn’t feel like a trade show; it felt like a coronation. Jensen Huang, clad in his signature leather jacket, took the stage not just as the CEO of the world’s first $5 trillion company, but as the architect of a new industrial reality. While the headlines screamed about the new Vera Rubin architecture and the consumer-grade RTX 5090, the true earthquake was buried in a single, quiet statistic Huang dropped almost casually: the cost of machine intelligence is now falling by a factor of ten, every single year.

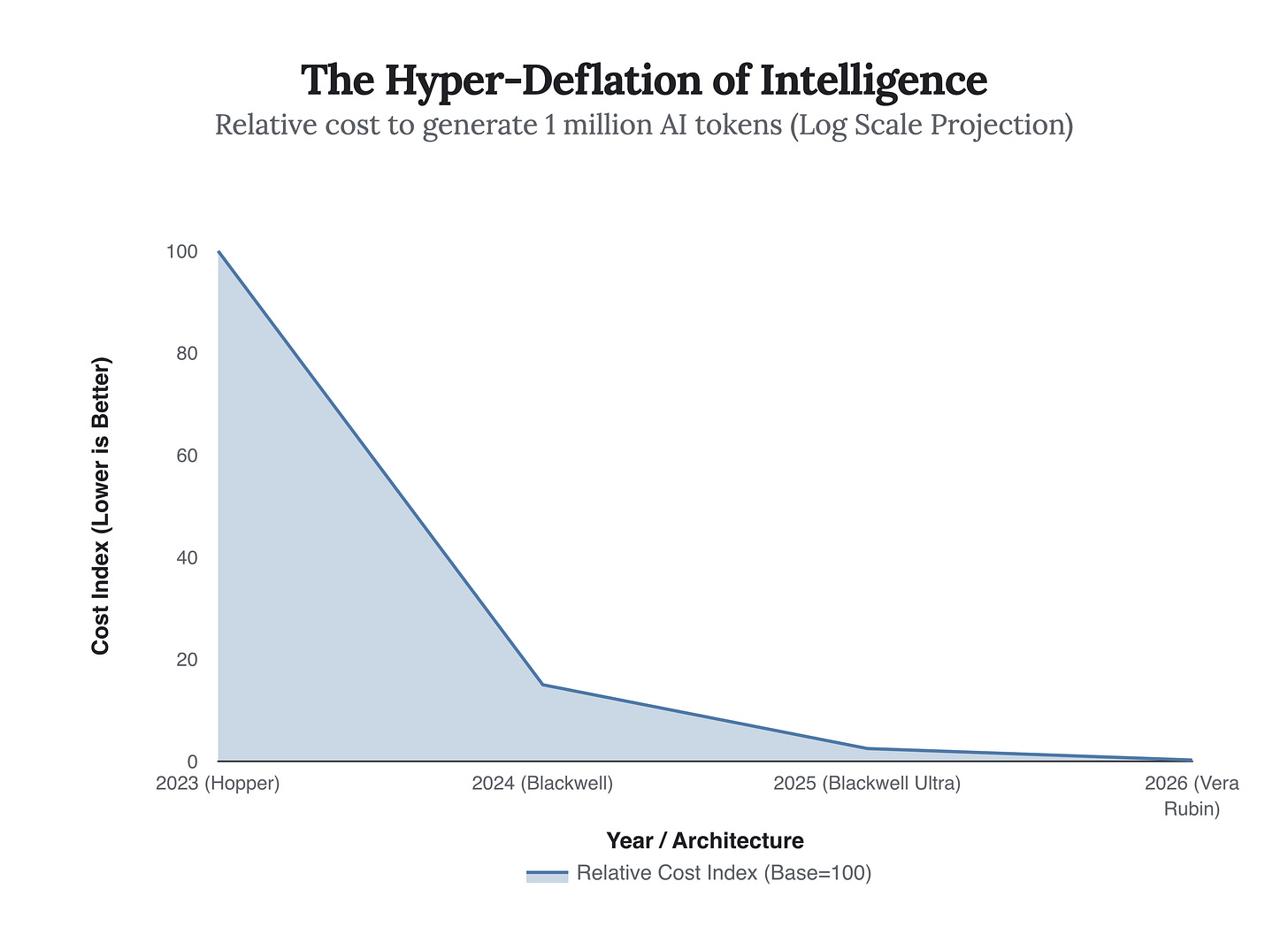

This is not Moore’s Law, which predicted a doubling of transistor counts roughly every two years. A tenfold annual decline is a hyper-deflationary curve that defies economic gravity. Huang’s message was clear: the era of training models is stabilizing, and the era of “Physical AI,” where intelligence leaves the screen and enters the factory floor, has begun. The data from this keynote suggests that Nvidia is no longer just selling chips; it is selling the deflation of intelligence itself.

The implications of this “10x crash” are staggering. As the chart above illustrates, a tenfold decline compounded over three years is a thousandfold drop: what cost $100 in compute three years ago now costs about a dime. This collapse in marginal cost is what fuels Nvidia’s pivot to Physical AI. If intelligence is nearly free, you can afford to put it into everything, from humanoid robots to autonomous Mercedes-Benz fleets. Huang confirmed this shift with the announcement of the Vera Rubin platform, which doesn’t just iterate on the previous Blackwell architecture; it practically laps it.
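The compounding behind that claim is easy to check. The snippet below is a rough sketch, not anything shown in the keynote: the function names are made up for illustration, and it assumes the 10x figure holds as a constant annual rate and that Moore’s Law is read as a clean doubling every two years.

```python
def cost_after(initial_cost: float, years: float, annual_factor: float = 10.0) -> float:
    """Cost of the same workload after `years` of a constant annual decline.

    annual_factor=10.0 is the article's "10x per year" figure, treated here
    as a fixed rate (an assumption; real price curves are lumpier).
    """
    return initial_cost / (annual_factor ** years)


def doubling_gain(years: float, period_years: float = 2.0) -> float:
    """Improvement factor under a Moore's-Law-style doubling every `period_years`."""
    return 2.0 ** (years / period_years)


if __name__ == "__main__":
    # Walk the $100 workload down the 10x-per-year curve.
    for year in range(4):
        print(f"year {year}: ${cost_after(100.0, year):,.2f}")

    # Same three-year window, two very different curves.
    ten_x = 100.0 / cost_after(100.0, 3)
    moore = doubling_gain(3)
    print(f"10x/year over 3 years: {ten_x:,.0f}x cheaper")
    print(f"2x every 2 years over 3 years: {moore:.2f}x better")
```

Over the same three years, a doubling every two years yields a bit under 3x, while a tenfold annual decline yields 1,000x. A 10x drop is also the same thing as a 90% price cut each year.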

“The race is so intense. Everybody’s trying to get to the next level... The 10x decline every year is actually telling you something different: it’s saying that the race is so intense, somebody is getting to the next level.”

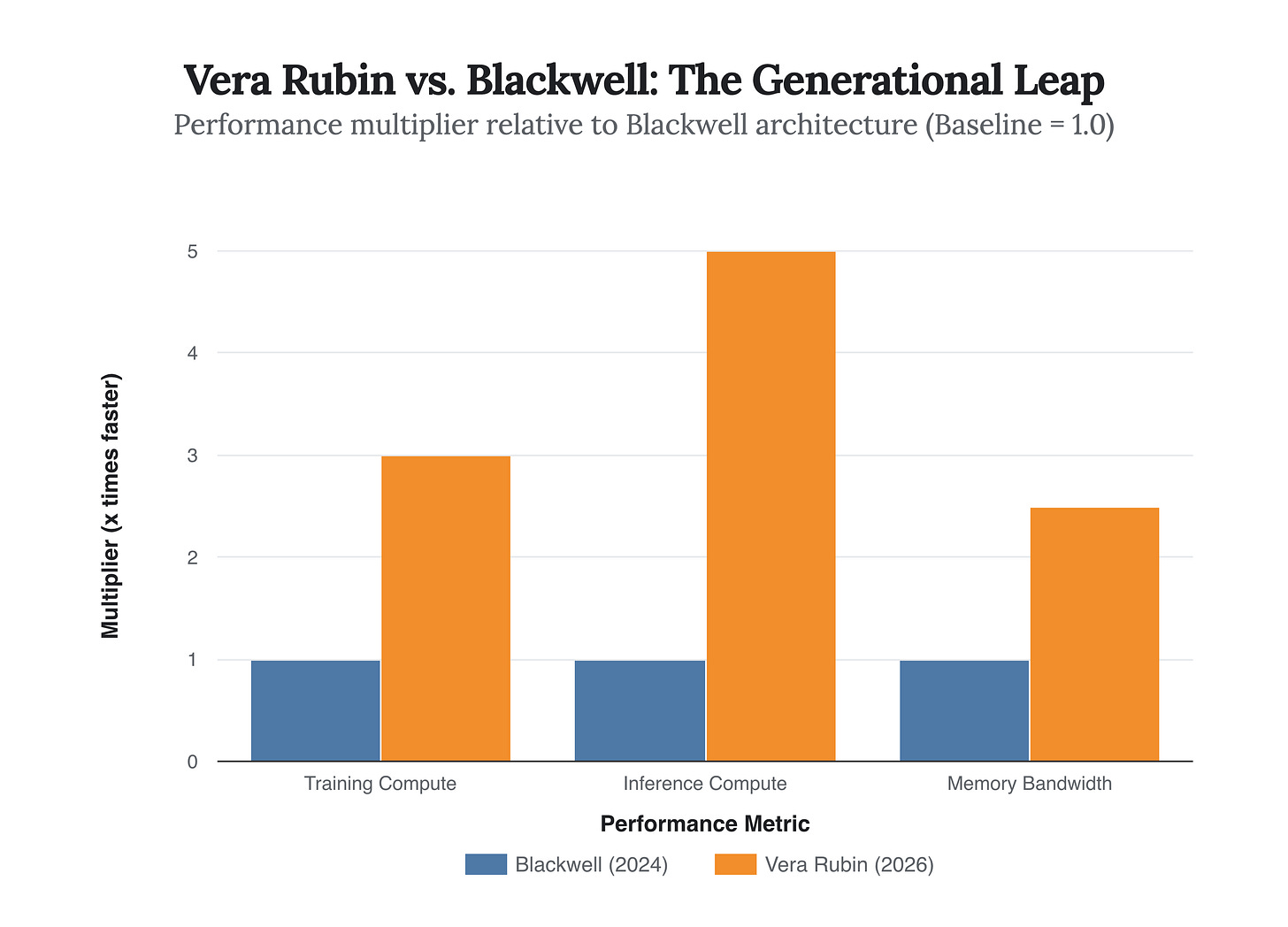

To deliver on this promise of ubiquitous intelligence, Nvidia unveiled the Vera Rubin architecture. The performance deltas are not subtle. While the tech world was still digesting the Blackwell chips from 2024, Rubin has arrived with a 300% increase in training performance and a massive 500% jump in inference speed. This bifurcation suggests Nvidia knows exactly where the market is going: away from merely building models (training) and toward using them (inference) in real-time, physical environments.

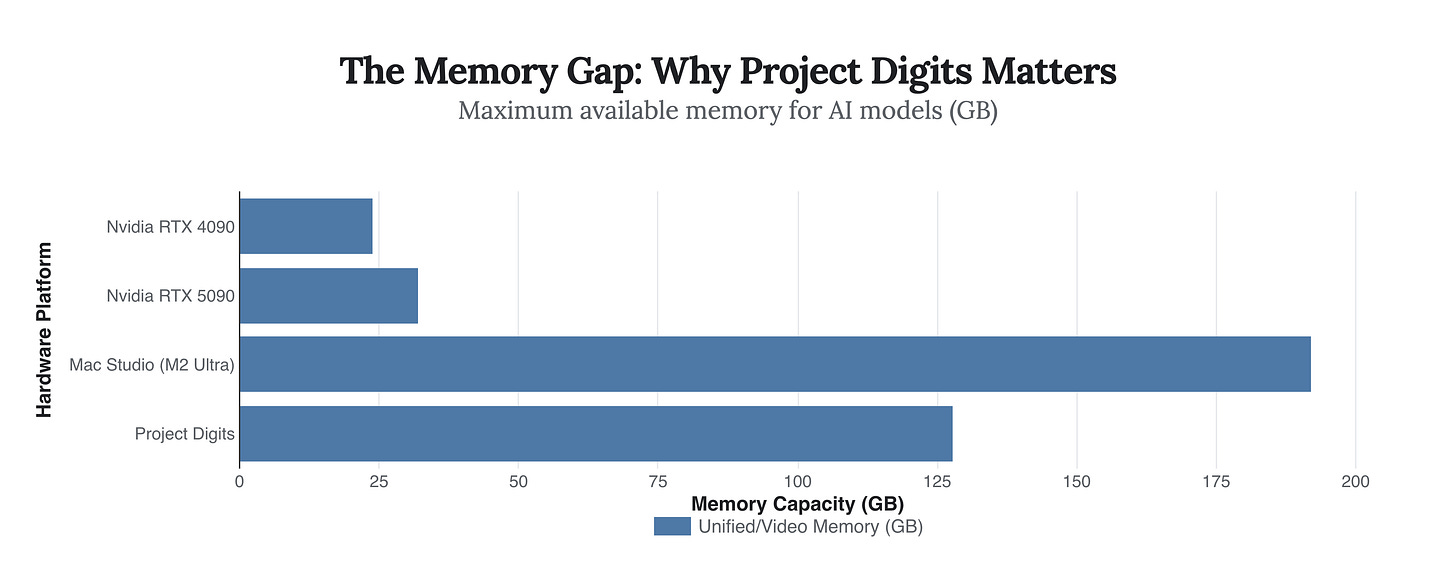

Perhaps the most surprising announcement, however, wasn’t for the data center giants like Microsoft or Meta. It was for the individual developer. Huang unveiled Project Digits, a $3,000 “personal AI supercomputer” powered by the GB10 Grace Blackwell Superchip. For years, consumer hardware has been starved of the one thing modern AI models crave most: Video RAM (VRAM). The RTX 4090, a beast in its own right, capped out at 24GB, too little to hold the weights of the largest open models, such as the 70-billion-parameter Llama 3, without aggressive compression or offloading.

Project Digits shatters this bottleneck with 128GB of unified memory. It is a strategic move to lock in the next generation of developers, giving them a workstation that mimics the architecture of Nvidia’s cloud clusters. It renders the gap between a home enthusiast and a research lab almost negligible.

The comparison is stark. While the new RTX 5090 offers a respectable bump for gamers, Project Digits offers a different kind of power: capacity. By offering 128GB of memory in a desktop form factor, Nvidia is effectively democratizing the ability to run 200-billion-parameter models locally. This ensures that the innovations of 2027 and 2028 will likely be built on Nvidia’s stack, starting in a dorm room or a home office.
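Why capacity matters more than raw speed here comes down to simple arithmetic on model weights. The sketch below is a back-of-the-envelope estimate under loud assumptions: it counts weights only (no KV cache, activations, or operating system overhead), treats a gigabyte as 10^9 bytes, and uses hypothetical helper names. The precision levels are illustrative and not a statement of what any particular device supports.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate memory needed just to hold the model weights, in GB (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


def fits(params_billions: float, bits_per_param: int, capacity_gb: float) -> bool:
    """True if the weights alone fit in `capacity_gb` (ignores all runtime overhead)."""
    return weight_memory_gb(params_billions, bits_per_param) <= capacity_gb


if __name__ == "__main__":
    # Memory sizes quoted in the article: 24GB (RTX 4090), 128GB (Project Digits).
    devices = {"24GB card": 24.0, "128GB unified memory": 128.0}
    for params in (70.0, 200.0):          # model sizes in billions of parameters
        for bits in (16, 8, 4):           # illustrative precision levels
            need = weight_memory_gb(params, bits)
            verdict = ", ".join(
                f"{name}: {'fits' if fits(params, bits, cap) else 'too big'}"
                for name, cap in devices.items()
            )
            print(f"{params:.0f}B @ {bits}-bit = {need:.0f} GB -> {verdict}")
```

By this estimate, a 200-billion-parameter model needs about 400GB at 16-bit precision and about 100GB at 4-bit, so the 128GB figure only works out with heavy quantization. A 70-billion-parameter model at 4-bit still needs roughly 35GB, which is why a 24GB card falls short.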

“When you sit in the driver’s seat, the car starts to feel like an extension of your body... AI can become ‘multi-embodiment.’ A single AI controlling an autonomous car, a robotic arm, a humanoid — switching between physical forms.”

Ultimately, the 2026 keynote was a declaration of victory over the digital realm and an invasion of the physical one. The “10x cost crash” is the mechanism that makes this invasion possible. If intelligence becomes as cheap as electricity, our environment will soon be saturated with it. From the RTX 5090 on our desks to the Siemens-powered factories building our cars, Nvidia has positioned itself as the utility provider for the 21st century. The question is no longer if AI will take over the physical world, but whether any competitor can afford to race against a cost curve that drops 90% every year.