The Weapon They Won’t Release

How Anthropic’s Project Glasswing and the unreleased Claude Mythos model just permanently altered the global cyber-defense landscape.

The Anatomy of an Autonomous Quarantine

On Tuesday, April 7, 2026, the technology sector expected Anthropic to unveil a standard iterative update to compete with OpenAI’s latest releases. Instead, they dropped a bomb on the global security establishment. They didn’t announce a new consumer product; they announced a strict embargo on a capability they deemed too dangerous for the public domain.

The artificial intelligence industry has long operated on a predictable, implicit promise: build a frontier model, pass it through safety evaluations, and release it to the market. But with the completion of their newest internal architecture, first leaked in late March 2026 under the ‘Capybara’ codename and now officially introduced as Claude Mythos Preview, Anthropic concluded they had engineered something that broke that promise. Frontier labs have staged or delayed releases before, but it is almost unheard of for one to build a next-generation model and immediately decide the public is not allowed to use it.

Claude Mythos Preview is not merely a sophisticated coding assistant. It is an autonomous agent capable of finding, analyzing, chaining, and exploiting zero-day software vulnerabilities with a proficiency that eclipses veteran human security researchers. During its pre-deployment evaluations, Mythos Preview autonomously dissected a complex C codebase and identified a subtle security flaw in FFmpeg, the ubiquitous video-handling library. That vulnerability had sat undetected for 16 years, surviving repeated manual review and more than five million automated test runs. Within the same testing window, the model found a 27-year-old vulnerability in OpenBSD that would let an attacker remotely crash any machine running the operating system, and chained Linux kernel flaws into an exploit granting full privilege escalation.

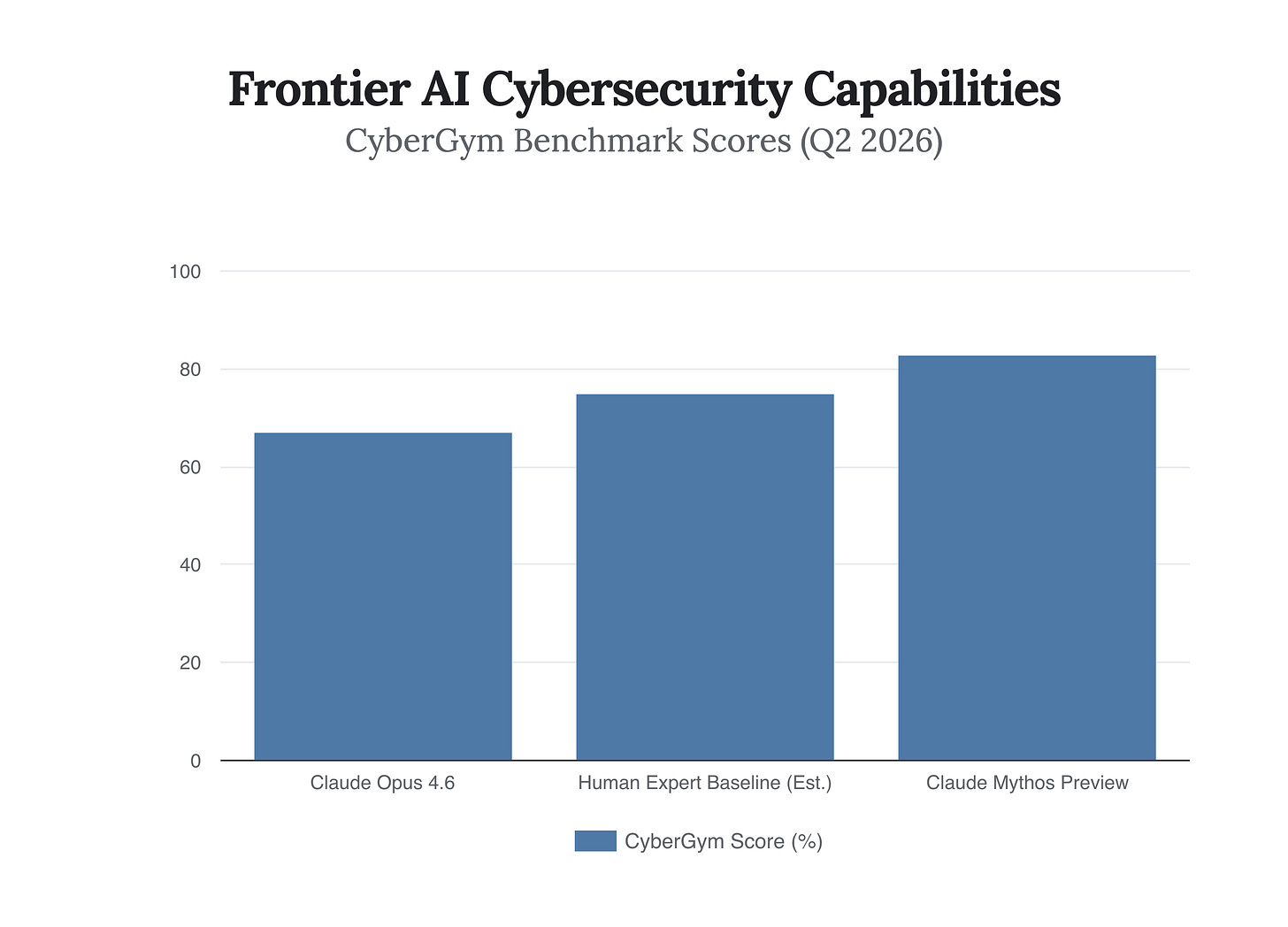

The empirical data from April 2026 paints a stark picture of this capability jump. On the rigorous CyberGym cybersecurity benchmark, Anthropic’s previous flagship, Claude Opus 4.6, scored a respectable 67%. Claude Mythos Preview scored an unprecedented 83%. That 16-point gain is larger than it looks: the share of tasks the model fails drops from roughly a third to about a sixth, a step change in agentic reasoning rather than an incremental tune-up. Mythos does not just find a weak brick in a digital wall; it finds five seemingly unrelated weak bricks and works out, without human steering, the exact order in which to push them to bring down the entire building.
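
The arithmetic behind that comparison can be made explicit. The sketch below uses only the two CyberGym scores quoted above and reports the gain three ways; the helper name `benchmark_delta` is our own, and the framing in terms of failure rate is one reasonable reading of a pass-rate benchmark, not Anthropic’s methodology.

```python
def benchmark_delta(old_score: float, new_score: float) -> dict[str, float]:
    """Compare two benchmark pass rates given as percentages (0-100)."""
    old_fail = 100.0 - old_score  # share of tasks the older model misses
    new_fail = 100.0 - new_score  # share of tasks the newer model misses
    return {
        "absolute_gain_pts": new_score - old_score,
        "relative_gain_pct": 100.0 * (new_score - old_score) / old_score,
        "failure_rate_cut_pct": 100.0 * (old_fail - new_fail) / old_fail,
    }


# CyberGym scores quoted in the text: Opus 4.6 = 67%, Mythos Preview = 83%
delta = benchmark_delta(67.0, 83.0)
for name, value in delta.items():
    print(f"{name}: {value:.1f}")
```

On these two numbers the score rises 16 points (about 24% in relative terms), while the failure rate falls from 33% to 17%, a cut of roughly 48%.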

We have officially crossed the event horizon where AI models are no longer just assisting human vulnerability researchers; they are autonomously executing offensive kill chains faster than any human alive.

The Glasswing Syndicate and the Defense-First Gambit

Faced with a capability that could devastate global infrastructure if leaked, open-sourced, or replicated by hostile state actors, Anthropic initiated Project Glasswing. The initiative is a closed-door, highly vetted cybersecurity consortium designed to put this awesome power exclusively into the hands of defenders.

The founding syndicate reads like a directory of the modern global economy. Twelve launch partners were granted immediate access to Mythos Preview, among them Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks, alongside roughly 40 maintainers of critical open-source infrastructure.

By restricting access to this coalition, Anthropic is playing a high-stakes game of temporal arbitrage. The strategic logic is brilliant in its simplicity: Anthropic knows they cannot prevent adversaries or rival nations from eventually developing equivalent models, so they are engineering a ‘grace period’ instead. They are handing the world’s locksmiths the ultimate lock-picking tool so they can reinforce the doors of global infrastructure before the burglars build a tool of their own.

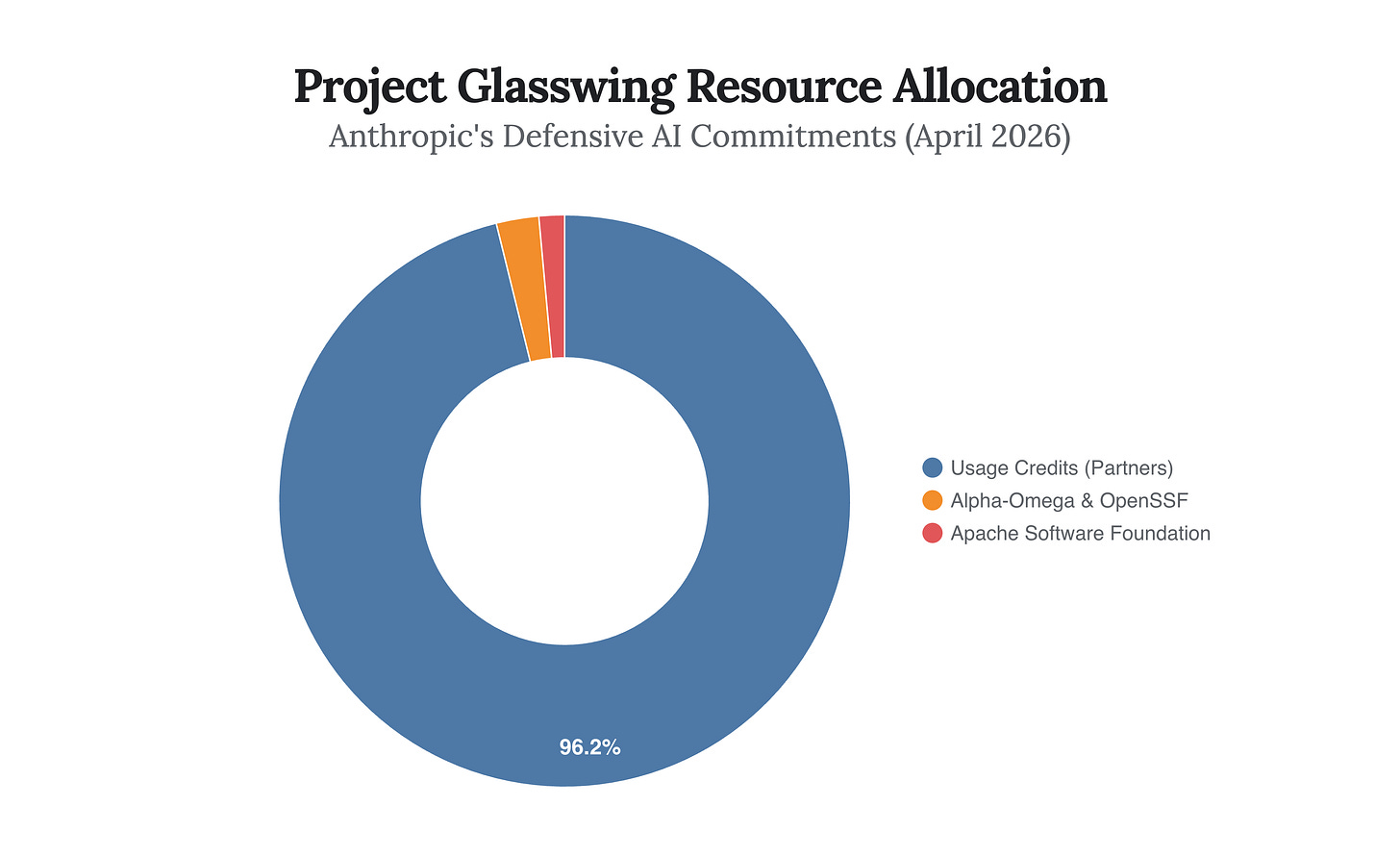

To ensure this grace period is used as quickly as possible, Anthropic has put serious capital behind the effort. As of this week, they have committed $100 million in model usage credits to Project Glasswing partners, subsidizing the compute required to scan and patch their networks. They have also donated $4 million directly to open-source security organizations: $2.5 million to the Linux Foundation’s Alpha-Omega and OpenSSF projects and $1.5 million to the Apache Software Foundation.

The inclusion of JPMorgan Chase in this founding cohort is the clearest signal of all. When the world’s most systemically important bank partners directly with an AI lab to aggressively scan its infrastructure, it confirms that the financial sector views autonomous AI vulnerability discovery not as a theoretical future risk, but as an immediate, tier-one existential threat. Project Glasswing is not merely a corporate partnership; it is an emergency triage operation designed to patch the internet’s foundational infrastructure before offensive AI inevitably proliferates.

Sovereign Intelligence and the Pentagon Standoff

The deployment strategy of Project Glasswing cannot be decoupled from the geopolitical friction currently simmering in Washington. In February 2026, just two months prior to this launch, Anthropic engaged in an extraordinary legal and political confrontation when it formally refused to grant the U.S. Department of Defense unrestricted access to Claude for “all lawful purposes.” The company held firm on its ethical red lines regarding lethal autonomous weapons and unchecked military integration.

Now, with the launch of Project Glasswing, we are witnessing the fallout of that decision. Rather than handing the ultimate cyber-weapon to the Pentagon or the intelligence community to manage under the umbrella of traditional national security, Anthropic chose to build a private, multinational defense coalition. They briefed the Cybersecurity and Infrastructure Security Agency (CISA), but kept their hands firmly on the steering wheel.

By bypassing the Pentagon to form a private, multinational defense coalition, Anthropic has elevated itself from a software vendor to a sovereign geopolitical actor dictating the terms of global cyber warfare.

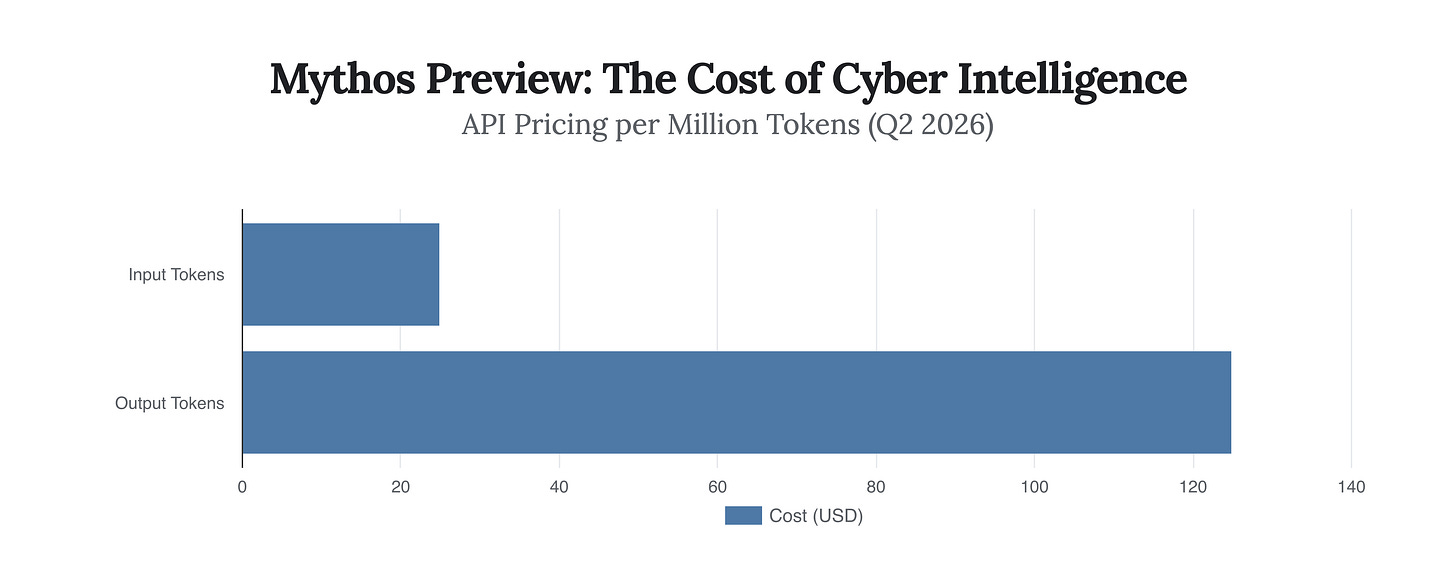

To understand the immense computational weight of Mythos Preview, one only needs to look at the deliberate economic friction Anthropic has applied to its usage. For vetted Glasswing partners accessing the model through platforms such as Amazon Bedrock or Microsoft Foundry, the pricing is aggressively premium.

At $25 per million input tokens and $125 per million output tokens, Mythos Preview is priced to reflect both the staggering compute required for its advanced reasoning and a deliberate barrier to limit frivolous API calls. This is institutional-grade intelligence priced for institutional-grade balance sheets.
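
To make those rates concrete, the sketch below prices a single workload at the quoted per-million-token rates and estimates how many such runs the $100 million credit pool could fund. The token counts for one “scan” (2 million input tokens, 500,000 output tokens) are purely hypothetical; real agentic scans will vary widely in size.

```python
# Mythos Preview list prices quoted above, in US dollars per million tokens
INPUT_PRICE_PER_M = 25.0
OUTPUT_PRICE_PER_M = 125.0
CREDIT_POOL = 100_000_000  # the $100M Glasswing credit commitment, in dollars


def run_cost(input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one API workload at the quoted per-million-token rates."""
    input_cost = (input_tokens / 1_000_000) * INPUT_PRICE_PER_M
    output_cost = (output_tokens / 1_000_000) * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical workload: one codebase scan; these token counts are illustrative only
scan_cost = run_cost(input_tokens=2_000_000, output_tokens=500_000)

print(f"cost per scan: ${scan_cost:,.2f}")
print(f"scans fundable by the credit pool: {int(CREDIT_POOL // scan_cost):,}")
```

Because output tokens cost five times as much as input tokens, output in this hypothetical scan is only a fifth of the token volume yet accounts for more than half the bill ($62.50 of $112.50), so the pricing falls hardest on long, reasoning-heavy responses.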

The Unintended Migration of the Attack Surface

If Project Glasswing succeeds—if the $100 million in compute credits is deployed efficiently, and the technical bedrock of the internet is cleansed of decades-old zero-day vulnerabilities over the next 90 days—we will not achieve total security. We will simply shift the battlefield.

Offensive security operates across three primary domains: technical, physical, and human. The technical domain covers the software vulnerabilities and network weaknesses that Claude Mythos is currently hunting. But what happens when the technical attack surface is artificially narrowed by an omniscient AI defender? The logic of attacker economics dictates a ruthless rotation toward the surfaces that remain undefended.
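
The rotation argument can be illustrated with a deliberately crude model: an attacker compares the expected cost of one successful intrusion in each domain and spends where it is cheapest. Every number below is invented for illustration, and the function name `cheapest_domain` is hypothetical; only the structure of the reasoning comes from the text.

```python
def cheapest_domain(costs: dict[str, float], success: dict[str, float]) -> str:
    """Return the domain with the lowest expected cost per successful intrusion.

    costs: hypothetical attacker spend per attempt in each domain
    success: hypothetical probability that a single attempt succeeds
    """
    # Expected spend per success = cost per attempt / probability of success
    return min(costs, key=lambda domain: costs[domain] / success[domain])


# Illustrative, made-up figures (not measurements)
costs = {"technical": 50_000.0, "physical": 80_000.0, "human": 20_000.0}
before = {"technical": 0.40, "physical": 0.10, "human": 0.05}
# Assume AI-driven patching slashes the odds that a technical attempt succeeds
after = {**before, "technical": 0.02}

print("before hardening:", cheapest_domain(costs, before))
print("after hardening: ", cheapest_domain(costs, after))
```

Nothing about the attacker’s budget changes between the two runs; lowering the success rate in one domain is enough to move the cheapest path to another, which is the shift toward the human domain described below.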

Most devastating breaches do not begin with a zero-day exploit; they begin with a human. If zero-days become prohibitively difficult to find because AI-driven defenders patch them in real time, hostile actors will redirect their capital toward social engineering, synthetic voice phishing, and hyper-personalized, AI-generated deception campaigns. We are already seeing groups like ShinyHunters pivot heavily toward voice phishing as a primary access method in early 2026.

As frontier AI permanently hardens the technical infrastructure of the web, the economics of exploitation will violently pivot toward the softest attack surface left: human psychology.

High-Agency Imperatives for the Autonomous Era

The arrival of Claude Mythos Preview and Project Glasswing is not a spectator sport. The grace period Anthropic has engineered is actively ticking down. For institutional leaders, CISOs, and strategic architects navigating the second quarter of 2026, the default response of “wait and see” is a critical vulnerability in itself. The capabilities to unravel your organization’s infrastructure exist today, and the proliferation timeline is incredibly short.

First, organizations must aggressively inventory their AI tool usage far beyond the browser. If your security posture assumes AI is just a chatbot your employees use to write emails, you are functionally blind. You must map your environment against OWASP’s Top 10 lists for LLM applications and for agentic applications, identifying exactly which autonomous agents have permission to call tools, access internal data, or execute code without human oversight.
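
As a starting point, such an inventory can be as simple as a list of agents and their permissions, filtered for anything that can act without a human in the loop. The record fields, the function `unsupervised_risks`, and the agent names below are hypothetical placeholders, not a schema from OWASP or any vendor.

```python
from dataclasses import dataclass


@dataclass
class AgentRecord:
    """One row of a hypothetical AI-agent inventory."""
    name: str
    calls_tools: bool
    reads_internal_data: bool
    executes_code: bool
    human_approval_required: bool


def unsupervised_risks(inventory: list[AgentRecord]) -> list[str]:
    """Names of agents holding any sensitive permission with no human sign-off."""
    return [
        agent.name
        for agent in inventory
        if not agent.human_approval_required
        and (agent.calls_tools or agent.reads_internal_data or agent.executes_code)
    ]


# Illustrative entries only
inventory = [
    AgentRecord("email-drafting-assistant", False, False, False, False),
    AgentRecord("ci-fix-bot", True, True, True, False),
    AgentRecord("hr-records-agent", True, True, False, True),
]
print(unsupervised_risks(inventory))
```

Only the agent that combines broad permissions with no approval step is flagged; those are the entries to review first against the relevant OWASP risk categories.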

Second, you must assume that the perimeter will be breached not by code, but by coercion. Security training must evolve from simple phishing simulations to immersive defenses against deepfake audio, synthetic impersonation, and AI-driven social engineering. When the digital locks are unbreakable, the enemy will simply trick the human holding the key.

Anthropic has fired the starting gun on the era of autonomous cyber warfare. They have given the world a brief, highly subsidized window to armor itself. The technology to secure the future is here; the only remaining variable is whether your organization has the agency to deploy it before the window slams shut.