The rumor mill in Silicon Valley has shifted from whispering about AGI timelines to shouting about solvency. The question circulating through the boardrooms of Davos this week is blunt: Will OpenAI go bankrupt?

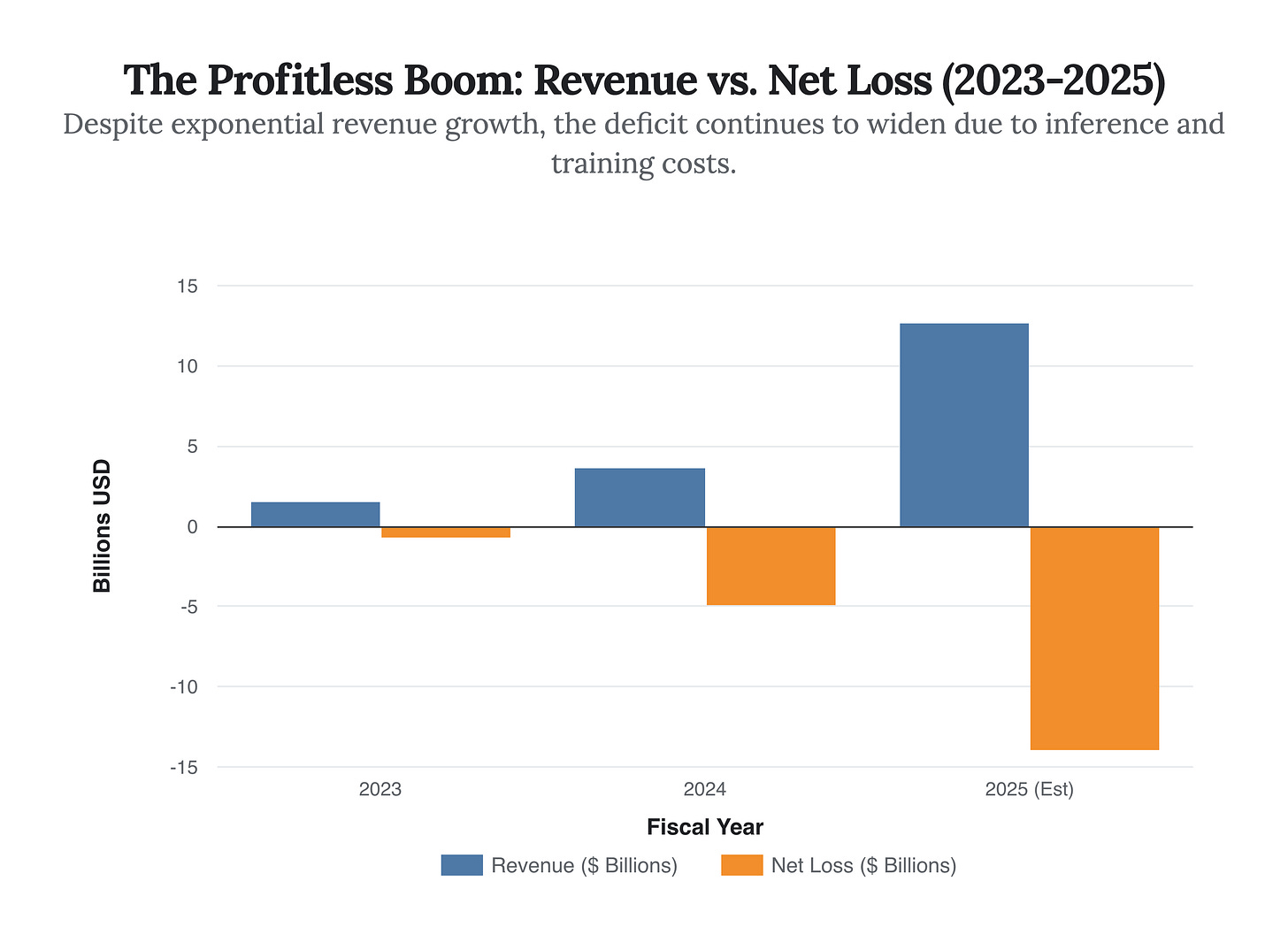

To the untrained eye, the financials look catastrophic. Entering 2026, the company has reportedly posted a net loss exceeding $14 billion for fiscal year 2025, despite revenue skyrocketing to nearly $13 billion. In any traditional industry, whether SaaS, manufacturing, or consumer goods, spending more than $2 to earn $1 is a death spiral. But to view OpenAI through the lens of a traditional software company is a category error.

OpenAI is not going bankrupt in the literal, run-out-of-cash sense. The recent capital injections, valuing the entity at over $300 billion, have secured its runway through 2027. However, the company is suffering from a different, perhaps more dangerous condition: The Capital Trap. It has successfully transitioned from a research lab to a utility, but in doing so, it has traded high-margin software economics for the brutal, capital-intensive thermodynamics of an energy supermajor.

This briefing deconstructs the financial reality of OpenAI, the erosion of its technological monopoly by Anthropic, and why the “bankruptcy” risk isn’t about running out of cash—it’s about running out of reasoning for the valuation.

The Thermodynamics of Burn: Analyzing the Deficit

The most critical strategic development of 2025 was the decoupling of revenue growth from margin expansion. In the classic software playbook, once you cover your fixed engineering costs, every additional user is almost pure profit. OpenAI has inverted this physics. Every additional user requires compute resources that scale at least linearly with usage, and sometimes faster.
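The contrast between the two cost structures can be sketched with a toy margin model. Every number below is a hypothetical placeholder chosen for illustration, not an OpenAI figure, and the function name is ours; the only point is the shape of the curve as the user count grows.

```python
def margin(users: int, revenue_per_user: float,
           fixed_cost: float, variable_cost_per_user: float) -> float:
    """Return (revenue - cost) / revenue for a simple fixed-plus-variable cost model."""
    revenue = users * revenue_per_user
    cost = fixed_cost + users * variable_cost_per_user
    return (revenue - cost) / revenue

# Hypothetical inputs, for illustration only.
REVENUE_PER_USER = 20.0
FIXED_COST = 1_000_000.0
CLASSIC_SOFTWARE_COST_PER_USER = 0.5   # near-zero marginal cost per user
COMPUTE_BOUND_COST_PER_USER = 30.0     # serving a user costs more than they pay

for users in (10_000, 1_000_000, 100_000_000):
    classic = margin(users, REVENUE_PER_USER, FIXED_COST,
                     CLASSIC_SOFTWARE_COST_PER_USER)
    compute = margin(users, REVENUE_PER_USER, FIXED_COST,
                     COMPUTE_BOUND_COST_PER_USER)
    print(f"{users:>11,} users: classic {classic:+.1%}, compute-bound {compute:+.1%}")
```

In the classic case the fixed cost is diluted and the margin climbs toward its ceiling as users are added. In the compute-bound case the margin can never exceed one minus the ratio of per-user cost to per-user revenue, so if serving a user costs more than that user pays, no amount of scale turns the margin positive.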

Our analysis of the leaked financials and investor updates from Q4 2025 reveals a stark trajectory. While revenue grew nearly 250% year-over-year, operational costs (driven primarily by Microsoft Azure compute and Nvidia hardware acquisition) grew in lockstep.

The chart above illustrates the core problem: Unit Economics. In 2025, for every $1 of revenue generated, OpenAI spent approximately $2.20. This is not “blitzscaling” in the Reid Hoffman sense, where you spend on marketing to acquire a sticky user. This is structural cost. The majority of this spend is not R&D; it is the cost of goods sold (COGS)—specifically, inference. The electricity and silicon required to run the models are eating the company alive.
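As a rough cross-check on that ratio, the headline figures quoted earlier can be run through a few lines of Python. This is a back-of-the-envelope sketch, not a reconstruction of the company's accounts: it assumes total spend equals revenue plus net loss, it uses the rounded figures from this briefing, and the function and constant names are illustrative.

```python
def spend_per_revenue_dollar(revenue: float, net_loss: float) -> float:
    """Dollars spent per dollar of revenue, assuming spend = revenue + net loss."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue + net_loss) / revenue

# Rounded figures quoted in this briefing, in USD billions.
REVENUE_2025 = 13.0   # "nearly $13 billion"
NET_LOSS_2025 = 14.0  # "exceeding $14 billion"

ratio = spend_per_revenue_dollar(REVENUE_2025, NET_LOSS_2025)
print(f"Implied spend per $1 of revenue: ${ratio:.2f}")
```

With these rounded inputs the ratio comes out near $2.08. Because the loss is described as exceeding $14 billion on slightly less than $13 billion of revenue, that is a lower bound, which is consistent with the roughly $2.20 cited above.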

The “Good Enough” Threshold

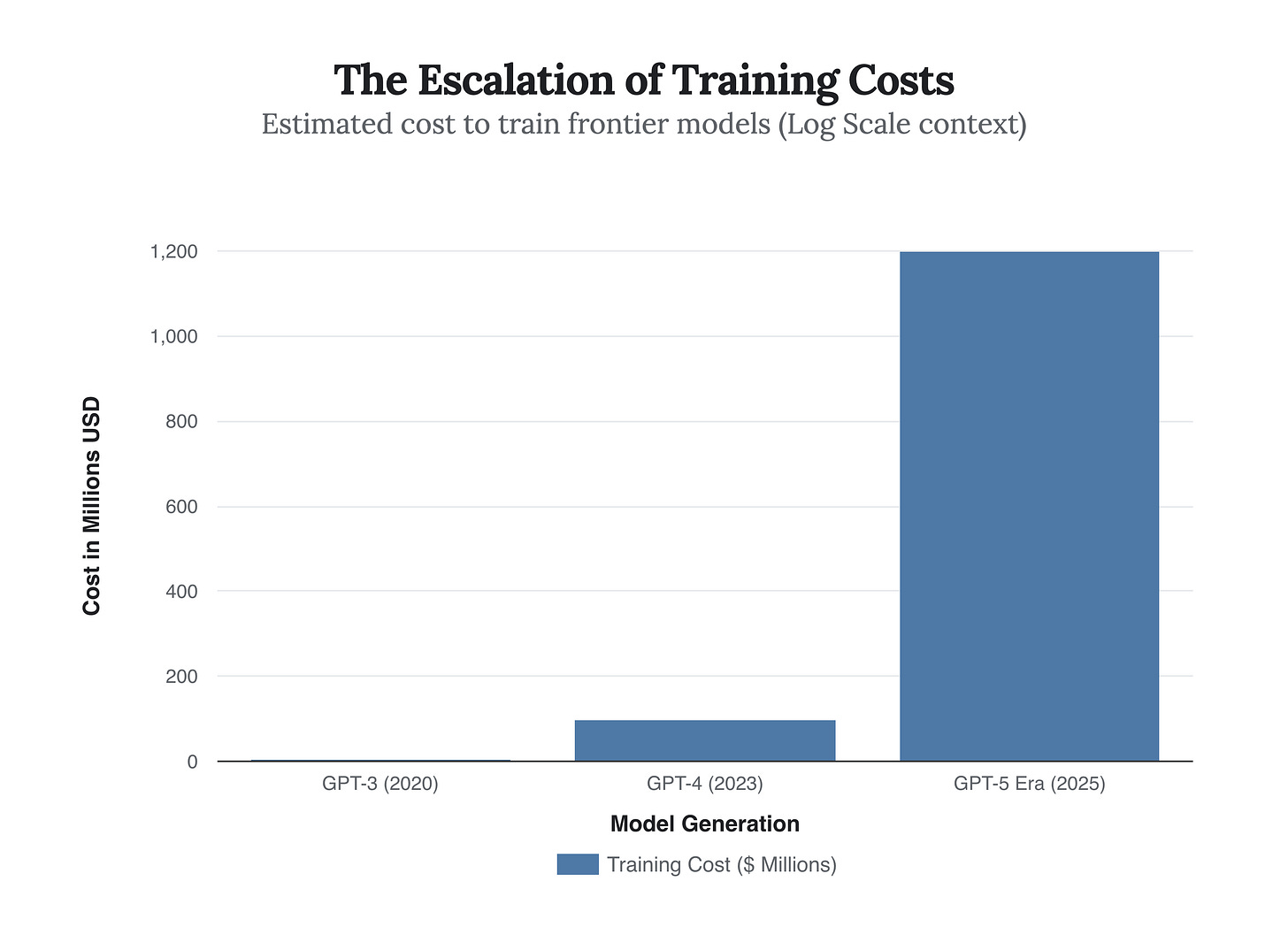

The strategic crisis is exacerbated by the commoditization of intelligence. In 2023, GPT-4 was a Ferrari in a world of bicycles. Today, it is a Ferrari in a traffic jam of Lamborghinis. By late 2025, the cost to train a frontier model had hit the $1 billion mark, yet the performance gap between OpenAI’s models and open-weight alternatives like Meta’s Llama series had all but vanished for 90% of use cases.

This escalating cost structure creates a “moat of money,” but it cuts both ways. It prevents startups from entering the training game, but it also locks OpenAI into a cycle in which it must raise massive capital rounds just to hold its position relative to the competition.