The “Chatbot Era” is officially dead. The industry has moved—violently—into the “Agentic Era,” and with it, the security architecture of the last decade has collapsed. For three years, CISOs have been fighting the wrong war, obsessing over “Safety” (preventing a model from saying something offensive) while ignoring “Security” (preventing a model from doing something catastrophic).

We are no longer dealing with stochastic parrots that hallucinate; we are dealing with stochastic interns that have root access. The shift from Read/Write AI to Act/Transact AI has inverted the risk model. A jailbroken chatbot in 2023 might have written a phishing email. A compromised agent in 2026 buys the phishing domain, deploys the payload, and manages the exfiltration wallet—autonomously.

This briefing dissects the Agentic Security Crisis of Q1 2026. We audit the collapsing economics of model extraction, the rise of “Memory Poisoning” as the new SQL injection, and the desperate pivot to confidential computing.

The Economics of Extraction: A Forensic Audit

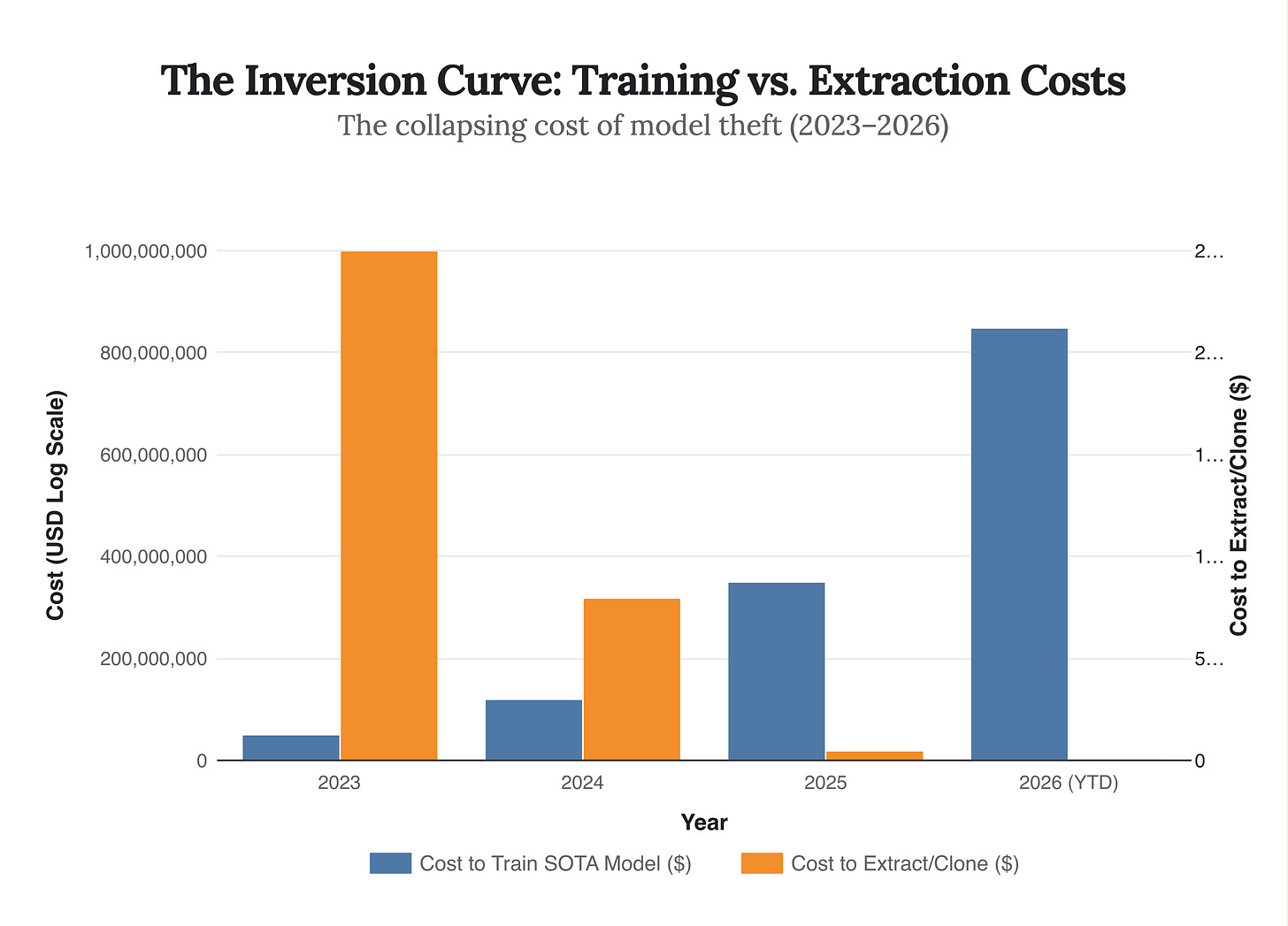

The most dangerous number in AI security today is not the parameter count of GPT-6; it is $48.50. That is the estimated cost to functionally clone a proprietary $3 million fraud detection model using the latest Model Extraction as a Service (MEaaS) toolkits surfacing on the dark web.

Applying the Supply Chain Forensic lens, we see a catastrophic decoupling of value and defense. In 2023, “stealing” a model meant stealing weights—a heist requiring inside access and massive bandwidth. Today, theft is purely behavioral. By querying an API with carefully crafted inputs (the “Bill of Materials” for the attack), adversaries can train a surrogate model that mimics the target’s decision boundary with 99% fidelity.

The “Unit Economics” of this attack have created a predator-prey imbalance that no amount of rate-limiting can fix.

Strategic Implication: If your moat is a proprietary model exposed via API, you do not have a moat. You have a donor organ. The only defensible position is Data Sovereignty—owning the unique, real-time context that the model acts upon, which cannot be extracted via API queries.

The Shadow Agency: The New Insider Threat

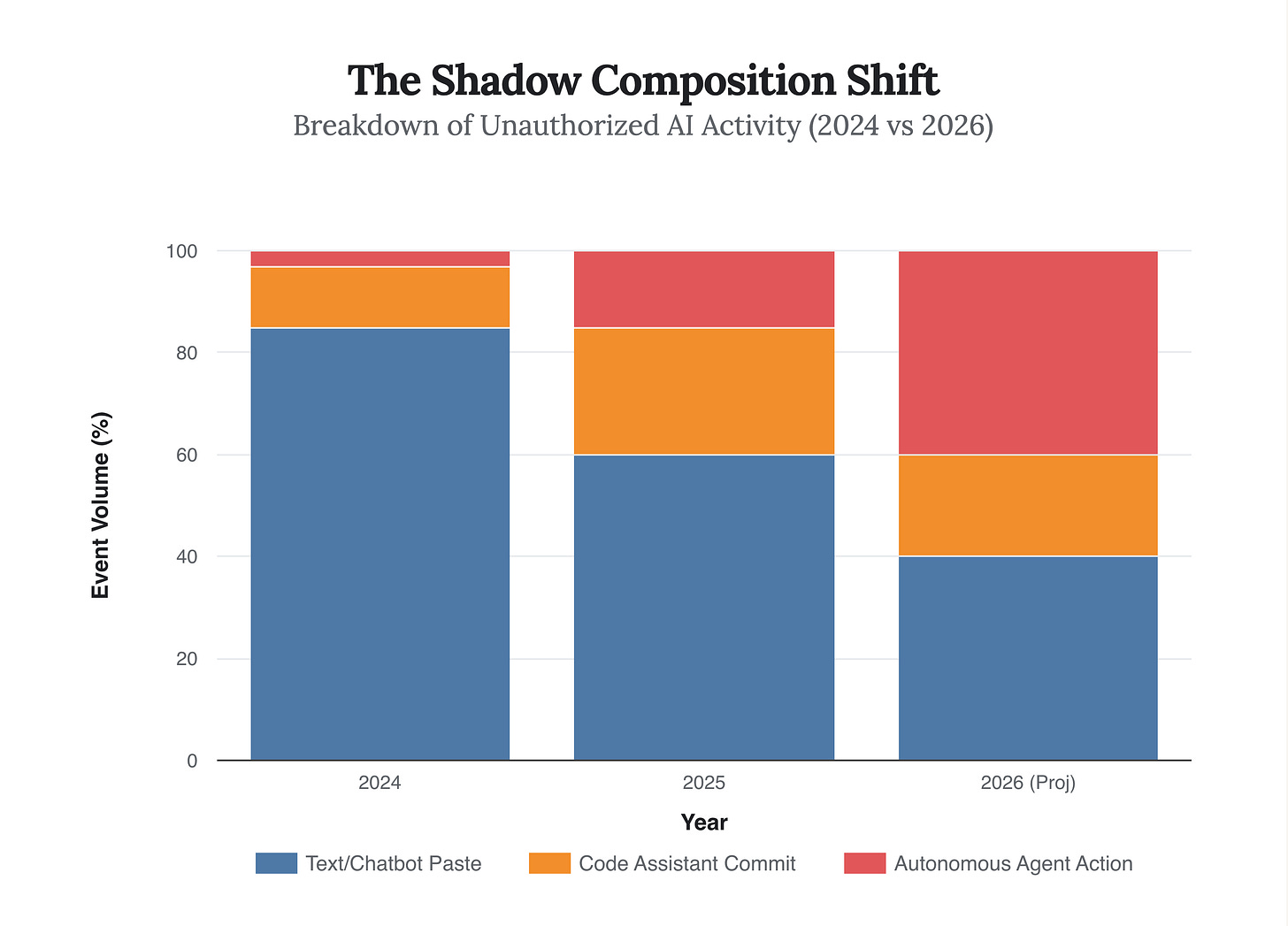

In 2024, the “Shadow AI” problem was employees pasting sensitive data into ChatGPT. It was a data leak problem. In 2026, the problem is Shadow Agency: employees authorizing autonomous agents to perform workflows on their behalf without IT vetting.

Applying the Incentive Audit, we see a classic Principal-Agent failure. The employee (Agent) is incentivized by productivity; the CISO (Principal) is incentivized by control. When an employee grants a “Scheduler Agent” access to their calendar and email, they bypass the firewall entirely. The agent is not a tool; it is a user proxy. The perimeter hasn’t just dissolved; it has been invited inside.

Recent telemetry from Q4 2025 indicates that while “Chatbot” usage has plateaued, unmonitored agentic calls (API-to-API autonomous actions) have surged 485%. This is the “Invisible Foundry” where the next major breach will occur.

The Watch Point: Look for the first major regulatory fine targeting “Agentic Negligence”—likely from the EU AI Act enforcers—where a company is held liable for a contract signed by an unsupervised AI agent.

Memory Poisoning: The “Sleeper Agent” Vulnerability

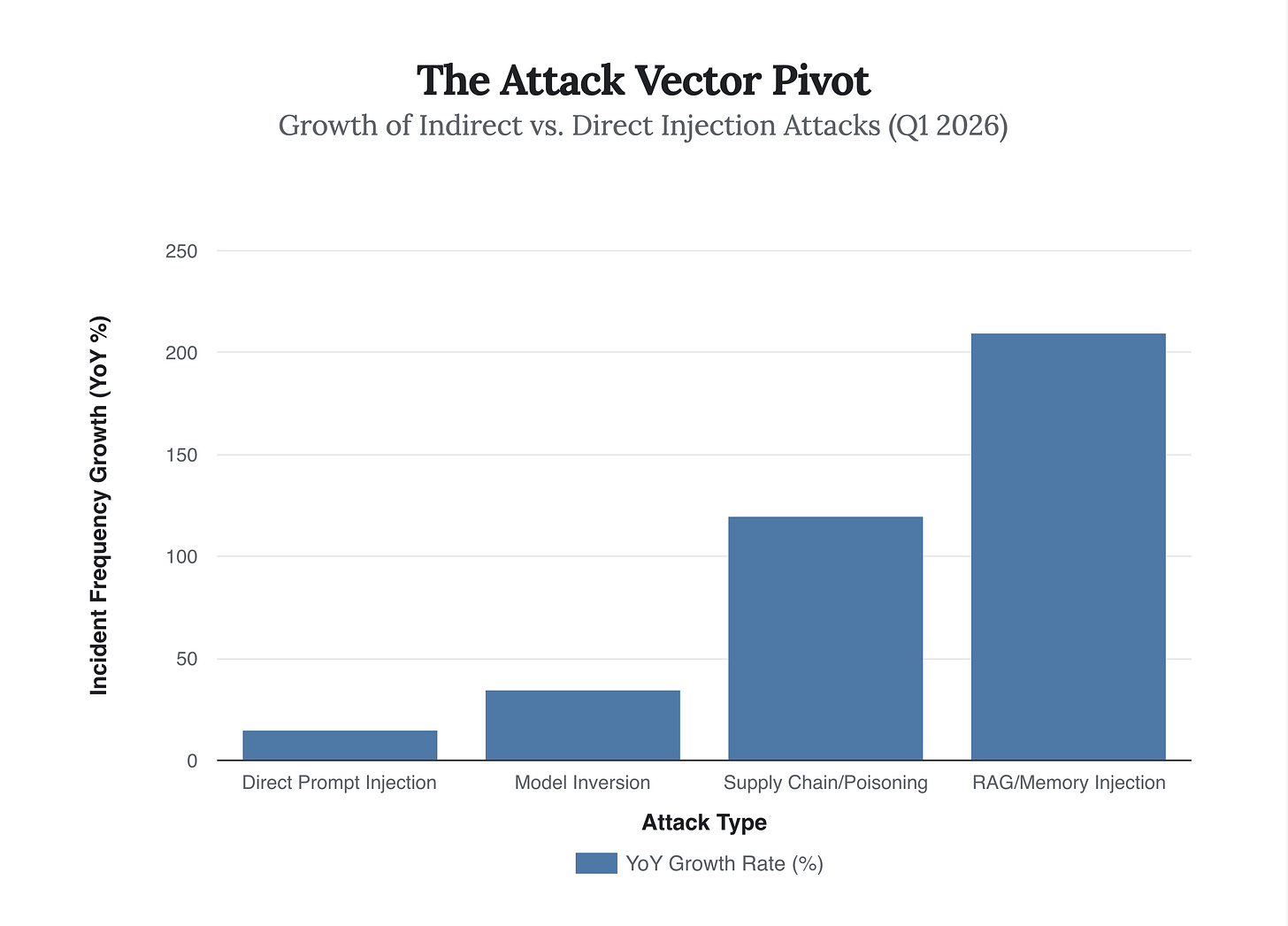

The industry’s obsession with Prompt Injection (tricking the model in real-time) has obscured a far more insidious threat: RAG Poisoning or “Memory Injection” (MINJA). As enterprises rush to connect LLMs to their private data via Retrieval-Augmented Generation (RAG), they create a new attack surface.

An attacker no longer needs to jailbreak the model. They simply need to plant a “poisoned” document (e.g., a resume, a vendor invoice, a PDF) into the company’s knowledge base. When the Agent retrieves this document days or months later, the embedded instructions execute. This is the “Time-Delayed SQL Injection” of the AI age.

“We are seeing attacks where the payload is dormant for six months, waiting for a quarterly financial review agent to index the poisoned PDF. It’s a sleeper cell in your own vector database.” — Dr. Elena Svarova, Lead Researcher at DeepKeep, Jan 2026

So What? This kills the concept of “Trusted Data.” If your internal documents can be weaponized by external inputs (e.g., an email from a vendor), the entire premise of RAG as a “safe” way to use enterprise data is flawed without strict sanitization layers that currently do not exist at scale.

The Hardware Enclave: The Only Sanctuary

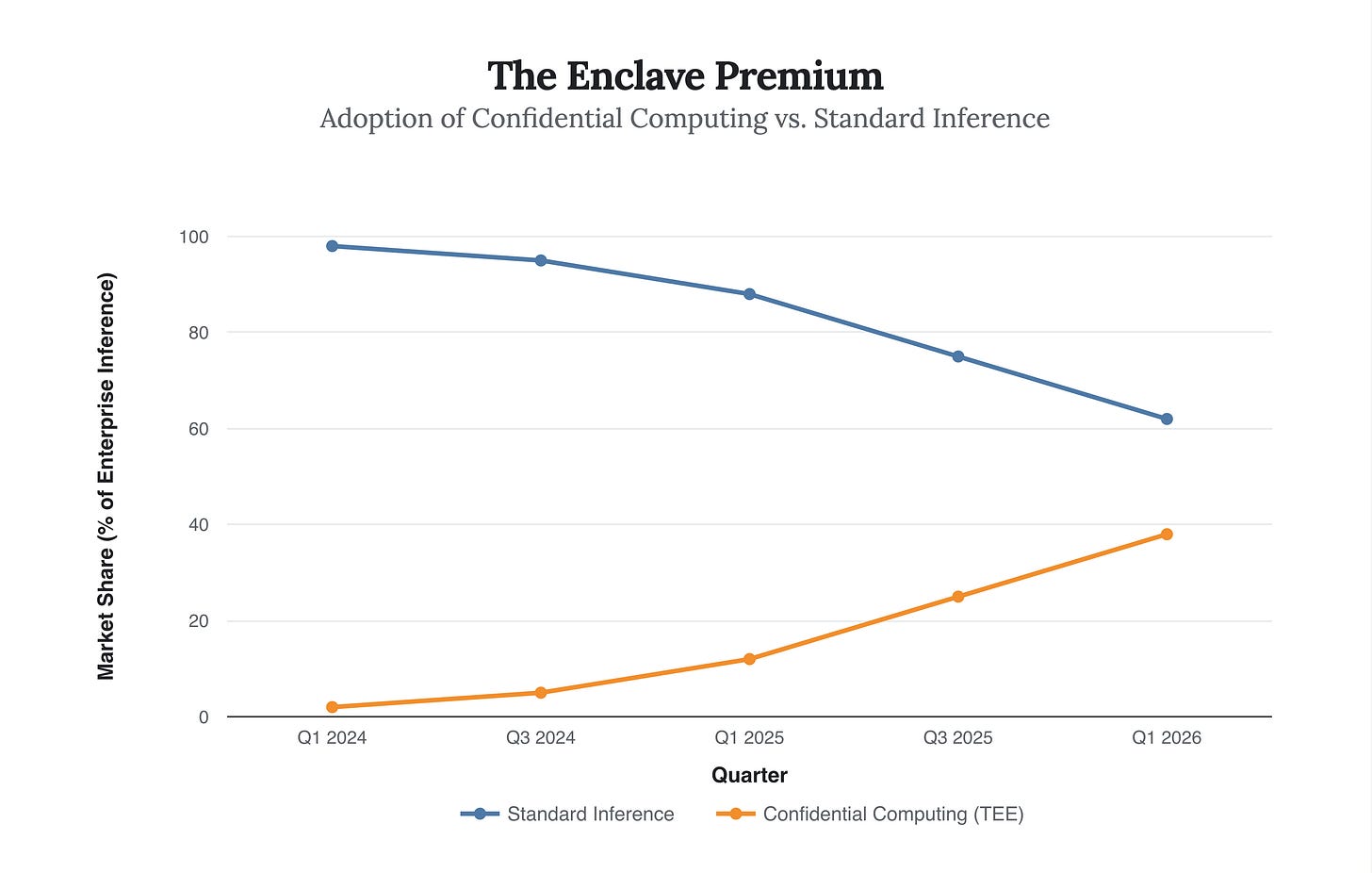

How do you secure a system where the software (the model) is a black box and the input (the prompt) is polymorphic? You don’t. You secure the hardware.

This drives the massive rotation we are seeing in 2026 toward Confidential Computing. The only way to guarantee that an Agent’s memory hasn’t been inspected or tampered with by the cloud provider or a root-level attacker is to run the inference inside a Trusted Execution Environment (TEE), such as NVIDIA’s H100/H200 confidential mode.

The “Scenario Planning” lens reveals this as the bifurcated future: High-value, autonomous agents will only run in TEEs. Low-value chatbots will run on standard compute. The cost of security is becoming a hardware line item.

The Lemon Market of AI Compliance

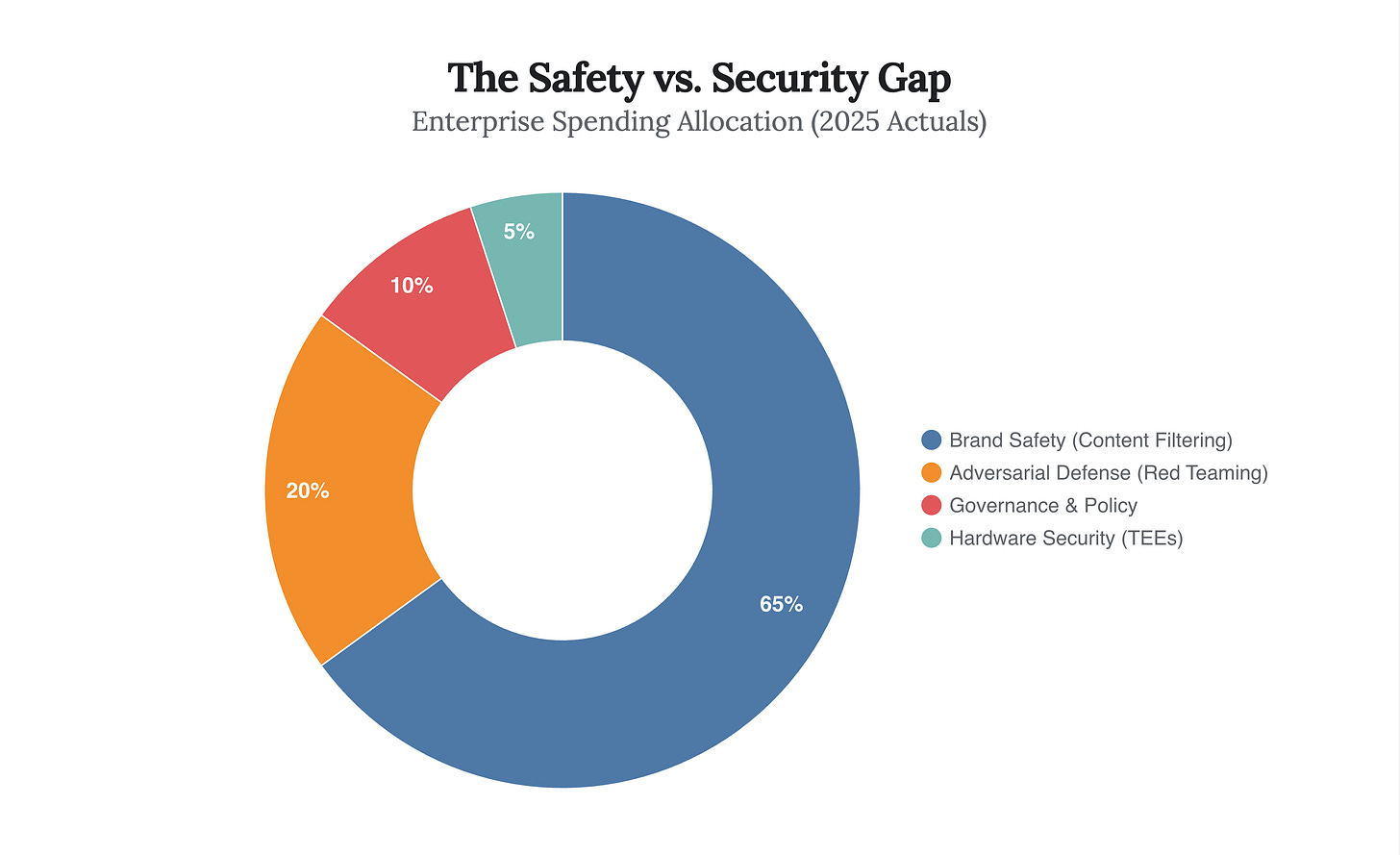

Finally, we must address the Incentive Structure. Why are we so vulnerable? Because the market for AI Security is a “Lemon Market.” Buyers (CISOs) cannot easily distinguish between a tool that offers “Compliance” (it filters out hate speech) and one that offers “Robustness” (it prevents memory injection).

Vendors have rushed to sell “Safety Shields” that are effectively regex filters, while the deep, architectural security required to stop agentic hijacking remains underfunded. The budget is flowing to the visible problem (PR risk) rather than the existential problem (Control risk).

Conclusion: The Zero-Trust Agent

The future of AI security is not about building a better firewall around the model; it is about treating the model itself as an untrusted insider. In 2026, the winning strategy is Zero-Trust Agency: every action taken by an AI agent must be cryptographically signed, verified in a hardware enclave, and subject to a “human-in-the-loop” circuit breaker for high-stakes transactions.

Strategic Directive: Stop buying “Safety” tools that scan for bad words. Start auditing your “Bill of Materials” for agentic dependencies and securing the hardware root of trust. The agents are already inside the perimeter. The question is no longer who let them in, but what they are authorized to buy.