Sartre’s Ghost: Quantifying the Collapse of Agency in the Age of Inference

Jean-Paul Sartre famously declared that we are “condemned to be free.” In his view, existence precedes essence; we arrive as blank slates, forced to constantly invent ourselves through radical, unscripted choice. To Sartre, the only sin was “Bad Faith”—the denial of this freedom by pretending we are fixed objects, defined by our past or our society.

Some eighty years later, the industrial logic of Silicon Valley has inverted this maxim. In the AI economy of 2026, essence precedes existence. Before you act, your action is modeled, priced, and sold. The predictive engine does not merely guess what you will do; it curates the environment to ensure the prediction comes true.

This is not a philosophical abstraction. It is a measurable economic reality visible in the CapEx filings of Hyperscalers and the latency logs of H100 clusters. The radical freedom Sartre described is being eroded not by tyranny, but by a simpler, more ruthless force: Variance Suppression. To an algorithm, human agency is “entropy”—noise that degrades the efficiency of the prediction model. And in 2026, the most profitable user is the one with zero entropy.

The Physics of Fate: Why Inference Killed Choice

To understand why your choices are shrinking, you must look at the supply chain of the hardware that curates them. For the last decade, the narrative focused on Training—the massive, one-time energy cost of teaching a model. But as we entered late 2025, the economic center of gravity shifted violently to Inference—the real-time cost of generating a prediction for a specific user.

The Unit Economics of a Nudge

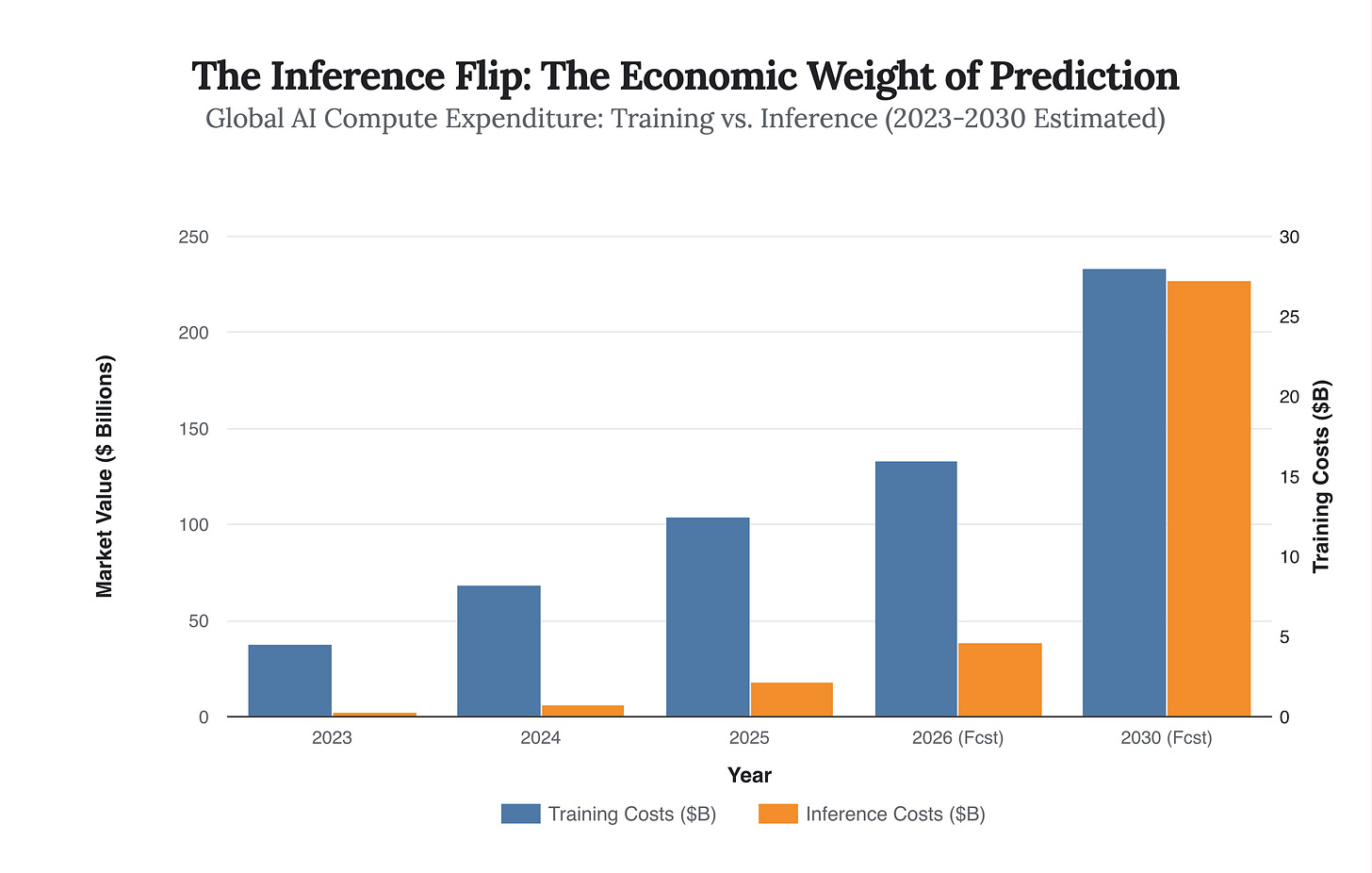

According to late-2025 industry analysis, inference now accounts for over 75% of total AI compute demand, and the inference market is projected to reach $255 billion by 2030. Training is a sunk cost; inference is a variable cost that scales with every second of human attention.

Consider the “Bill of Materials” for a single moment of choice on a platform like Instagram or TikTok. Every time a user scrolls, the system must execute a complex inference chain: retrieval, ranking, and behavioral probability scoring. If the user acts unexpectedly (Sartrean freedom), the model fails. The system must then re-calculate, burning expensive GPU cycles. If the user acts predictably, the cache hits, the pre-fetch works, and the margin improves.
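The arithmetic behind that margin can be sketched in a few lines. This is a toy model, not any platform's actual accounting: the function name, the hit rates, and the unit costs are all hypothetical, chosen only to show how the expected cost of a scroll falls as the user becomes easier to predict.

```python
def expected_cost_per_scroll(hit_rate: float, hit_cost: float, miss_cost: float) -> float:
    """Expected compute cost of serving one scroll event.

    hit_rate  -- fraction of scrolls where the pre-computed prediction holds
    hit_cost  -- cost of serving from cache / pre-fetch (arbitrary units)
    miss_cost -- cost of re-running retrieval, ranking and scoring
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_cost + (1.0 - hit_rate) * miss_cost


# Hypothetical unit costs: a miss is assumed to be 20x as expensive as a hit.
HIT_COST = 1.0
MISS_COST = 20.0

# A highly predictable user versus one who surprises the model half the time.
predictable = expected_cost_per_scroll(0.95, HIT_COST, MISS_COST)
erratic = expected_cost_per_scroll(0.50, HIT_COST, MISS_COST)

print(f"predictable user: {predictable:.2f} units per scroll")
print(f"erratic user:     {erratic:.2f} units per scroll")
```

Under these assumed numbers the erratic user costs roughly five times as much to serve as the predictable one. The real ratio depends entirely on figures the platforms do not publish; the sketch only shows the direction of the incentive.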

The chart above reveals the structural incentive. As inference costs explode (projected to run 15x higher than training costs over a model’s life), platforms are under direct financial pressure to minimize the “compute cost per user.” The cheapest way to lower that cost is to lower User Variance: a predictable user requires fewer parameters to model and fewer GPU cycles to retain.
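One way to put a number on “User Variance” is the Shannon entropy of a user's observed actions. That choice is an assumption made here for illustration; the essay does not say which metric, if any, a platform actually tracks, and the action labels below are invented.

```python
import math
from collections import Counter


def action_entropy(actions: list[str]) -> float:
    """Shannon entropy, in bits, of the empirical distribution of actions."""
    if not actions:
        raise ValueError("need at least one observed action")
    total = len(actions)
    counts = Counter(actions)
    # Sum of p * log2(1 / p) over each distinct action, with p = count / total.
    return sum((c / total) * math.log2(total / c) for c in counts.values())


# Hypothetical session logs.
scripted_user = ["like"] * 8                          # always does the same thing
free_user = ["like", "skip", "share", "search"] * 2   # four actions, equally likely

print(action_entropy(scripted_user))
print(action_entropy(free_user))
```

The scripted user scores zero bits: nothing about the next action is left to predict. The user who splits evenly across four actions scores two bits, the maximum for four options, and is in this framing the more expensive one to model.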